Don't Tokenmax—Do This Instead

Dispelling the myth that more tokens = better productivity

There’s been an interesting push recently for “tokenmaxxing”: the idea that burning through more tokens means an engineer has been more productive. The reasoning is that more tokens mean more AI use, which means getting more done and saving more time.

In reality, tokens used is a development velocity metric much like lines of code written. It’s not only inaccurate, it can actually measure the opposite: a decrease in development velocity. For more on measuring developer velocity via lines of code, read -2000 Lines of Code.

Measuring tokens used invites Goodhart’s Law: “When a measure becomes a target, it ceases to be a good measure”. This creates a gap between what the measurement rewards and what is actually useful: users prioritize models that burn tokens, redundant context over precise requests, and agentic workflows for small tasks that don’t need them. Instead of using proper techniques to be more productive with AI tools, engineers measure their productivity by how high they rank on a token spending leaderboard.

My experience has shown the opposite of tokenmaxxing (which I like to call “tokenminning”) is ideal for high velocity agentic development work. I’ve found my token use to directly correlate with the time I spend on a feature. If my agent is burning tokens, it generally means more time spent by me monitoring and reviewing that agent’s progress. This is directly contrary to the primary goal of agentic development tools: Enable engineers to accomplish more in less time.

To achieve this goal, it’s key to rely less on agent reasoning and ludicrous token spend and instead focus on a structured, well-thought-out engineering process that makes it easier for the coding agent to understand your request and adhere to it.

In this article, I cover topics to help you make your agentic development faster. We’ll go over:

My 3 tips for making agentic development use less of your time.

The tip I think is less helpful than most make it out to be.

A better measurement for agentic development velocity.

If you really want to get deeper into proper agentic development, don’t miss Packt’s Hands-on Spec-Driven Development Workshop coming up on May 14th.

This is a hands-on workshop that teaches you how to be more consistent when building with AI via spec-driven development. You’ll create a real application while learning how to define clear specs, guide AI reliably, and reduce rework.

This is the second cohort for this workshop after the first sold out. There are only 8 spots left. Secure your spot now and get 45% off with code LOG45.

I highly recommend Packt’s education resources so I’m excited to bring this discounted opportunity to you. The above is an affiliate link to help support the newsletter at no extra cost to you.

My top three tips

From working with agents both in and outside of work, here are my top three tips for making your agentic development workflow faster for you. The primary focus of these tips is on saving your time instead of token costs for your company.

The tips below are also useful for anyone building agents. Among other things, agents are fundamentally a context engineering problem. When you’re working with AI coding tools, you’re curating that context in real time.

1. Use smarter models

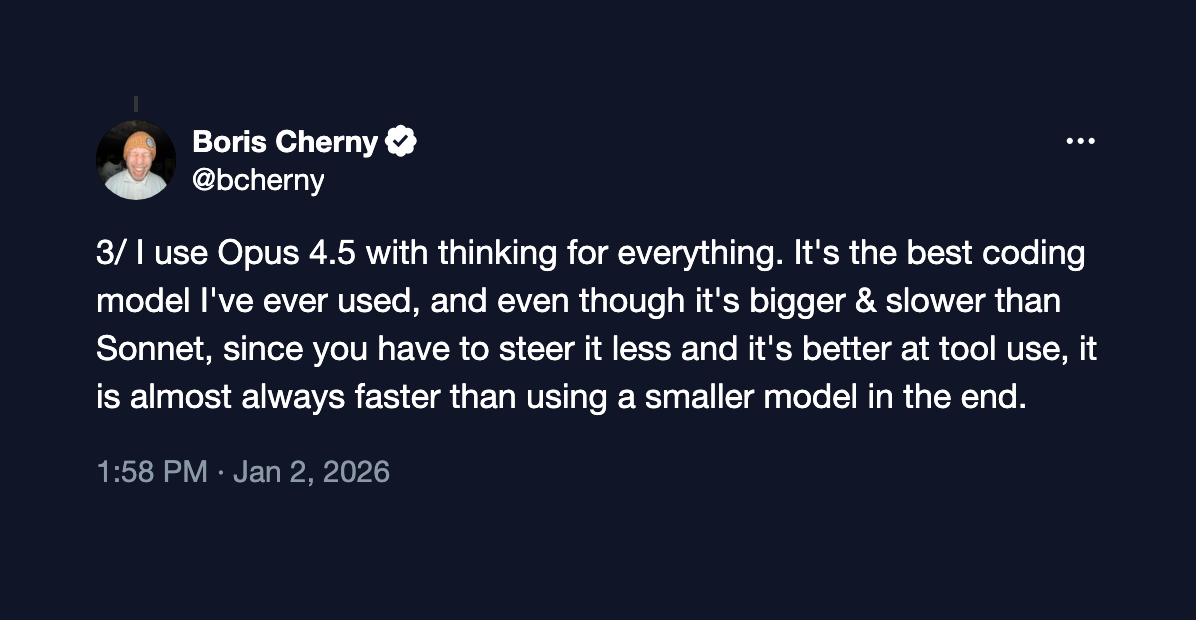

Ever since Boris Cherny explained that he only uses Opus for software development at Anthropic back in January, I’ve been trying to do the same with my own agentic development.

His thesis is that using a larger model and spending more per token results in far less overall token use than using a smaller model on the same task. The smaller model requires more steering and rework, which results in tokenmaxxing and costs more in the long run than the model that is more expensive per token.

I’ve found this to be the case in my own agentic development. Laying out requirements and setting up proper architecture is much easier with a more intelligent model with reasoning capabilities. It not only reduces spending as mentioned above, it also decreases the time an engineer spends correcting the model. While paying more per token might seem contrary to the tokenminning philosophy, it actually supports it in the long run.
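To make the arithmetic behind that thesis concrete, here is a minimal sketch. Every number in it (prices, tokens per attempt, number of rework rounds) is made up for illustration and is not taken from any real model's pricing or from my own usage data.

```python
from dataclasses import dataclass


@dataclass
class Run:
    """One way of completing a task. All values are hypothetical."""
    price_per_million_tokens: float  # USD per 1M tokens (made-up rate)
    tokens_per_attempt: int          # tokens burned on each attempt
    attempts: int                    # first attempt plus steering/rework rounds

    def total_tokens(self) -> int:
        return self.tokens_per_attempt * self.attempts

    def total_cost(self) -> float:
        return self.price_per_million_tokens * self.total_tokens() / 1_000_000


# Assumption: the large model gets it right in one pass,
# while the small model needs five extra rounds of steering.
large = Run(price_per_million_tokens=15.0, tokens_per_attempt=200_000, attempts=1)
small = Run(price_per_million_tokens=3.0, tokens_per_attempt=200_000, attempts=6)

for name, run in (("large", large), ("small", small)):
    print(f"{name}: {run.total_tokens():,} tokens, ${run.total_cost():.2f}")
```

With these invented numbers, the small model is five times cheaper per token yet burns six times the tokens and ends up costing $3.60 against $3.00. The sketch also leaves out the bigger cost: the engineer's time spent on each of those extra rounds.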

A tip I’ve frequently seen shared to get better cost-performance from agentic tools is to route models according to the task. The advice to ‘use a smaller model when performing a simple task’ is something I recommend against.

This is a very hard problem space. It’s very difficult to reliably predict which tasks a small model will be able to do on its own and which a larger model is needed for. I’ve used automated model routing for many tasks and can immediately tell when a small model has been prioritized. Even on teams that have the data necessary to make these decisions, model routing is difficult.

I recommend using larger, smarter models whenever possible as the time and cost trade-off in the end is worth it. One exception to this rule is for hobbyist projects. In that case, I recommend using whatever you want. Small models are often good enough in that environment and spending $100/mo on a subscription isn’t worth it.

2. Be precise

The biggest downfall of coding agents is that they try to do too much. I’ve seen this manifest as agents making random changes to unrelated files, working ahead on future tasks without being given permission to do so, repeating already finished work, and running unnecessary CLI commands in the midst of a task.

My biggest quality of life gain has been reining in these models to ensure they aren’t going off the rails. This is a harder task than it seems, but the best way to go about this is to be very precise in your requests to the agent and the environment you set up for them to work in.

Here are the considerations I take to be more precise:

Do some engineering before you start coding. Understand the problem and the code that needs to be written so you can properly direct the agent in the tasks it needs to do. You should know what needs to be done before the agent starts working. If you want to better understand how to do this, check out the workshop above.

Scope your requests to the agent to manageable tasks. Agents tend to go off the rails when you give them too much freedom to interpret implementation details. Be explicit about what you want the agent to do and the changes that need to be made to make that happen. This can’t be done without the up-front engineering described in the previous point.

In your requests, try not to make any typos. I’ve found this bit of precision helps an agent understand a request much better.

Manage context based on the task. As context gets larger, the agent has a greater chance of working outside of its scope. This means using separate chats for separate tasks, as the entire chat is passed in each time a message is sent. You don’t want information from a previous task polluting the task you’re completing now.

Be mindful when curating what the agent has access to and continually adjust this as you work. For example, I’ve found some agents to perform poorly when using source control tooling. I’ve removed that agent’s ability to call those tools and I do it myself instead. This also applies to the skills and MCP servers the agent can access which have the potential to pollute context and hurt performance.
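The point above about separate chats can be sketched with some rough arithmetic. This is a simplified model, not a description of any specific tool: it assumes each turn adds a fixed number of tokens (your message plus the reply), that the full history is resent on every turn, and it ignores prompt caching and context compaction.

```python
def cumulative_input_tokens(tokens_added_per_turn: list[int]) -> int:
    """Total tokens sent over a chat when every turn resends the full history."""
    total_sent = 0
    history = 0
    for added in tokens_added_per_turn:
        history += added       # this turn's content joins the history
        total_sent += history  # the whole history is sent on this turn
    return total_sent


# Hypothetical workload: ten turns of 2,000 tokens each.
one_long_chat = cumulative_input_tokens([2_000] * 10)
two_short_chats = 2 * cumulative_input_tokens([2_000] * 5)

print(f"one chat for both tasks: {one_long_chat:,} tokens sent")
print(f"one chat per task:       {two_short_chats:,} tokens sent")
```

Under these assumptions, the single long chat sends 110,000 tokens while the two task-scoped chats send 60,000 in total. More importantly for precision, the second task never sees the first task's history.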

3. Write manual code

This one might seem preposterous to anyone else who is chronically online, but, yes, the art of manually writing code is not dead. I’ve seen many people resort to only coding with AI even to the point of prompting for tiny changes.

You’re going to save a lot of the time you spend waiting for agents to understand your request if you take care of small changes yourself. It’s often wise to ‘Accept All’ on an agent’s output and make minor tweaks on your own instead of telling the agent what’s wrong and having it fix things for you.

Examples of these changes include:

Renaming a variable to be more descriptive.

Simple readability fixes.

Fixing or removing imports.

The only downside to manual coding is that you need to tell the agent you tweaked the code. Otherwise, the agent’s next change may overwrite your work, which can be a serious source of repeated work.

Don’t fall for the “I haven’t written a single line of code” narrative. I don’t know of a single engineer working on large-scale production services who doesn’t write some of the code by hand.

A better metric for agentic engineering velocity

The current best way to measure agentic development velocity is a metric called stickiness or code retention (but I prefer stickiness). This is a measurement of how much AI-generated code lasts without needing to be tweaked and without being overwritten soon after.

This metric is best measured by the AI coding tools themselves to understand their efficiency, but it’s also something engineers should keep in mind when using their tooling. If your AI-generated code is sticky, it’s likely you’re working productively with the AI. If it isn’t sticky, there might be too much rework occurring, which means the above tips are worth revisiting.
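As a rough illustration of the idea, here is one way stickiness could be approximated. This is my own simplified sketch, not how any particular tool computes it: it compares the lines an agent generated against a later snapshot of the same file and counts how many survive unchanged. The function name and the sample snippets are hypothetical, and a real implementation would more likely work from diff or blame data over a fixed time window.

```python
from collections import Counter


def stickiness(ai_lines: list[str], later_lines: list[str]) -> float:
    """Fraction of AI-generated lines still present, unmodified, in a later snapshot.

    Lines are compared ignoring surrounding whitespace; blank lines are skipped.
    """
    generated = Counter(line.strip() for line in ai_lines if line.strip())
    if not generated:
        return 0.0
    remaining = Counter(line.strip() for line in later_lines if line.strip())
    # Multiset intersection: each generated line counts at most as often as it survives.
    survived = sum((generated & remaining).values())
    return survived / sum(generated.values())


# Hypothetical example: the agent wrote four lines, two were rewritten later.
ai_generated = [
    "import os",
    "def load(path):",
    "    data = open(path).read()",
    "    return data",
]
a_week_later = [
    "import os",
    "def load(path):",
    "    with open(path) as f:",
    "        return f.read()",
]

print(f"stickiness: {stickiness(ai_generated, a_week_later):.0%}")
```

Here two of the four generated lines survive, for a stickiness of 50%. A low number like this on a regular basis would suggest the requests need more precision or the task was too loosely scoped.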

—

Tokenmaxxing is a silly way to measure developer productivity. The most important metric you should maximize with agentic engineering tools is the amount of time you reclaim to work on other things. Tokenminning, in my opinion, is the way to do this.

What tips do you have for faster agentic development? I’d love to hear them in the comments.

If you enjoyed this article, don’t forget to subscribe to AI for Software Engineers to get more just like it in your inbox.

Thanks for reading!

Always be (machine) learning,

Logan

Great tips! Definitely fits my own experience as well.

Just realized I forgot the section on which tip I think is less helpful than most make it out to be!

It's being familiar with your tools. While this is important, I think it's less helpful than these tool-agnostic tips for getting better at agentic coding.