What you should know about AI speculation

Thoughts on software engineering careers looking forward

Even the most talented engineers I know ask me questions about AI because they’re worried about its impact on their careers. Most recently, I’ve been asked about the Citrini Research article “THE 2028 GLOBAL INTELLIGENCE CRISIS”, which went viral and rattled markets enough to wipe billions of dollars off US-listed firms in a single day.

This article is a thought exercise in how the economy might be impacted by AI in the next two years. The author notes at the start that it’s entirely speculative:

“What follows is a scenario, not a prediction. This isn’t bear porn or AI doomer fan-fiction. The sole intent of this piece is modeling a scenario that’s been relatively underexplored.”

The article is the author’s vision of what could happen in 2028 due to AI. Many people have read and shared it as though it were a forecast, largely because nuance gets lost in the way information spreads online.

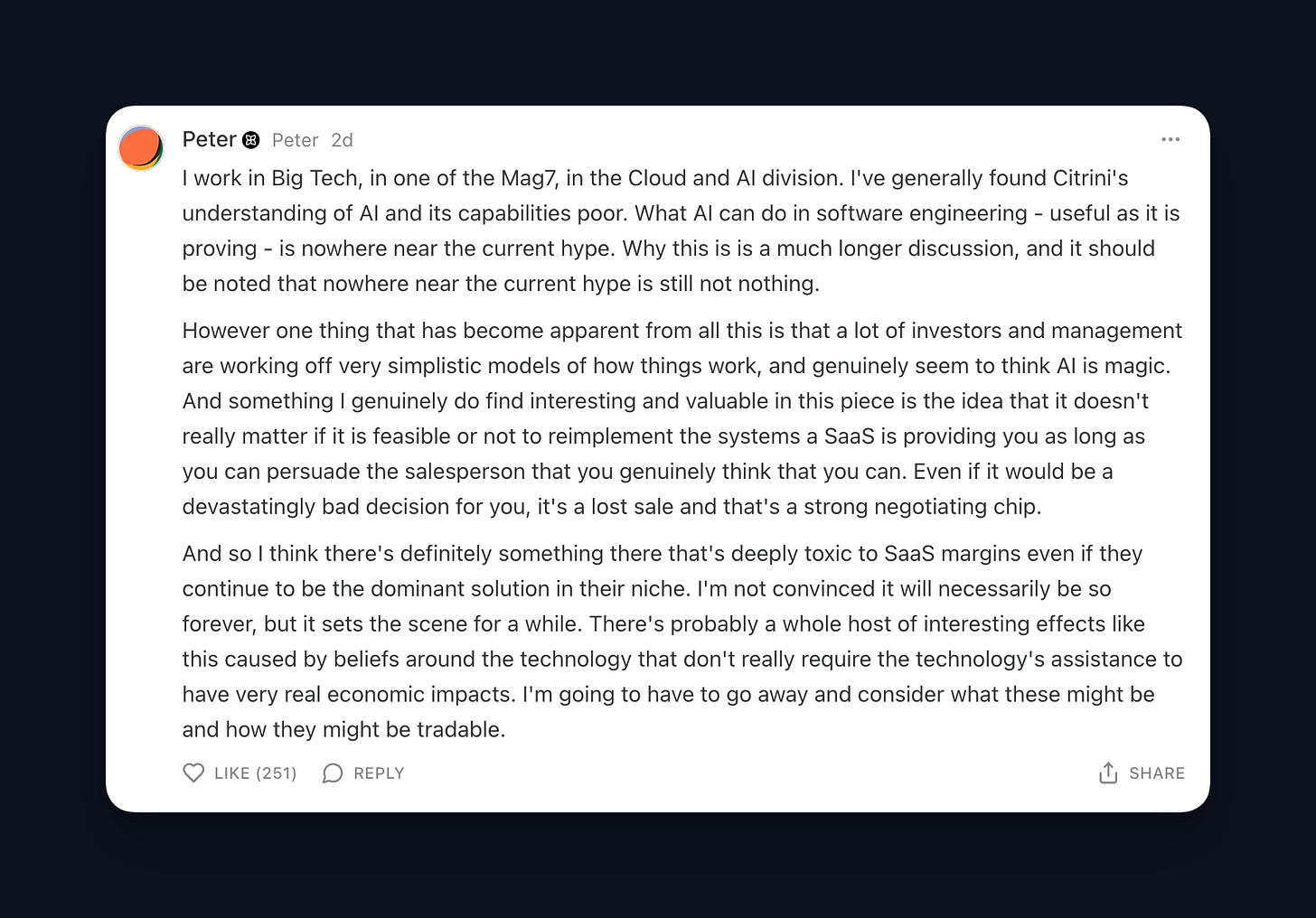

It seems to me that the author doesn’t have a technical understanding of AI or experience building it into real-world systems. This doesn’t mean their scenario definitely won’t happen. The future of AI and its impact is very hard to predict.

However, the implausibility of their scenario becomes apparent if you know a few things about the current state of AI and agents in production. There’s a consistent gap between perceived AI capabilities and production reality, and that gap explains most of the doomerism we see online. I’ll share what I’ve learned from working in the space and my opinion on what you should be doing now to prepare for whatever the future holds.

For a really concise tl;dr, read the top comment on the article (pictured above). Below is my opinion of the things you should know to ground your understanding of AI capabilities.

Even domain experts can’t make accurate predictions because so much is unknown

Estimates of AI’s impact have consistently been off, and breakthroughs have largely gone unforeseen until they happened. It’s highly unlikely that a non-expert knows what’s coming, regardless of the claims people make online.

AI impact on software engineering is fundamentally misunderstood by those outside of the industry

In fact, it’s obvious to me who writes software and who doesn’t simply from how they write about AI. The general view outside the industry is that software can now write itself: with zero friction for writing new services, there’s no need for software engineers or for many other jobs.

In reality, the friction very much still exists, and how good AI is at writing code is nuanced. It’s very good at some things and fails miserably at others, though this will improve over time. It’s also highly context-dependent, and the full context an AI needs to make a change is rarely readily available or provided. Hopefully this will improve too, but it’s turning out to be a much harder problem to solve.

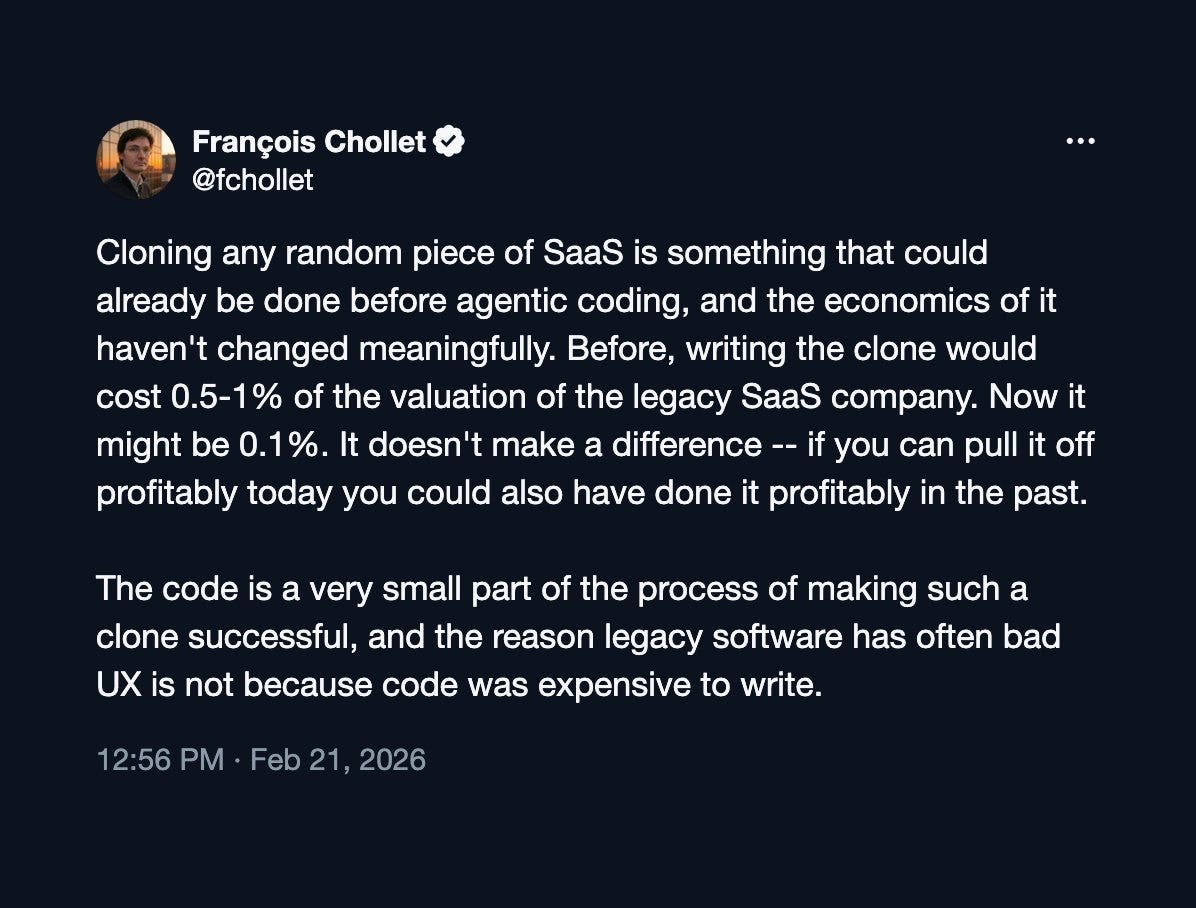

There have been many recent discussions about SaaS (software-as-a-service) being dead because code can be written so easily. There’s much more to writing software than just writing code. In many cases, writing code is the easy part. Deciding what to build, how to build it, and what is worth spending time on are fundamental to successful and efficient engineering.

There’s hope that agents will do these things more effectively in the future, but the friction of building and working with agents means that’s likely further out. This takes me to my next point.

(If you want to read more about AI’s impact on SaaS, I suggest François Chollet’s recent tweets on the subject.)

AI is kinda average

Fundamentally, AI output tends toward the average of its training data. More precisely, a model learns the distribution of what it’s trained on and samples from that distribution. This means it can produce outputs far from the average, but it gravitates toward the generic middle. Pre- and post-training techniques can steer its behavior, but the underlying data is still the most important factor when it comes to AI capabilities.
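To make “samples from a distribution” concrete, here’s a toy sketch in Python. The four tokens and their logits are made up for illustration and don’t come from any real model; the temperature-scaled softmax is the standard way language models turn scores into sampling probabilities. The sketch shows that unusual outputs remain possible, but the generic option holds most of the probability, and lowering the temperature pushes output even further toward it.

```python
import math
import random
from collections import Counter

# Hypothetical toy vocabulary, ordered from generic to unusual continuations.
TOKENS = ["generic", "common", "uncommon", "novel"]
# Hypothetical logits: generic continuations appeared far more often in the toy training data.
LOGITS = [3.0, 2.0, 0.5, -1.0]


def softmax(logits, temperature=1.0):
    """Turn logits into probabilities; lower temperature concentrates mass on the top token."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_counts(temperature, n=10_000, seed=0):
    """Draw n samples at the given temperature and count how often each token appears."""
    rng = random.Random(seed)
    probs = softmax(LOGITS, temperature)
    return Counter(rng.choices(TOKENS, weights=probs, k=n))


if __name__ == "__main__":
    for t in (0.5, 1.0, 1.5):
        probs = softmax(LOGITS, t)
        print(f"T={t}:", {tok: round(p, 3) for tok, p in zip(TOKENS, probs)})
    print("10,000 draws at T=1.0:", dict(sample_counts(1.0)))
```

Raising the temperature gives the unusual options more weight, but the generic continuation still dominates because that’s where most of the probability sits.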

When you train a model on a large corpus of internet data, its output will reflect that data. This is why AI is mediocre at writing and subpar at coming up with novel ideas. Reasoning helps with some of this by having the AI reflect on and refine its output, but fundamentally that output is still average.

This is why the importance of taste is currently the biggest discussion in software engineering: it’s something AI does a poor job with.

Agent capabilities are currently overstated

What matters most for creating production agents is understanding where they consistently fail and mitigating those failures. In production, reliability is paramount.

Online, we mostly see agent success stories because they get the most views. Some of those stories have been fabricated or exaggerated. Many are also one-off examples of something an agent happened to do once, not something it can do consistently.
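A success story is a single sample; reliability is a rate. Here’s a minimal Python sketch of that difference, assuming a made-up agent that succeeds 60% of the time. The function names and the success rate are hypothetical stand-ins, not a real agent or evaluation framework.

```python
import random


def hypothetical_agent_attempt(rng, success_rate=0.6):
    """Hypothetical stand-in for one agent run on a task.

    Real agents are not coin flips; the fixed success_rate is an assumption for illustration.
    """
    return rng.random() < success_rate


def evaluate(trials=500, seed=42):
    """Repeat the same task many times, the way you would before trusting an agent in production."""
    rng = random.Random(seed)
    results = [hypothetical_agent_attempt(rng) for _ in range(trials)]
    first_win = results.index(True) + 1  # the attempt a one-off success story would show
    rate = sum(results) / trials         # what actually matters for reliability
    return first_win, rate


if __name__ == "__main__":
    first_win, rate = evaluate()
    print(f"First success on attempt {first_win}; success rate over all runs: {rate:.0%}")
```

In practice you’d replace the stub with real runs of your agent against a fixed set of tasks; the point is to judge it by its success rate, not by its best run.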

Agents are useful and capable of many things, but this skew toward success stories has caused their capabilities to be overstated, which fuels doomerism and sensationalism online.

A good example is the truth behind the running joke about companies that say AGI is around the corner and AI will replace engineers while hiring more software engineers at the same time.

What should you be doing now?

I don’t have any career advice for staying relevant beyond what software engineers have always done: continuously learning new software engineering skills as the field changes.

The only suggestion I have outside of that is to simply use agents. Build them and apply them to your everyday work. Some examples are a chat agent with access to your documentation and custom resources or a background agent given some bugs to take care of on its own. This will quickly give you an understanding of where they’re effective and what capabilities they lack.

If I missed the mark, let me know what you think. This is an interesting space that’s hard to predict.

Thanks for reading!

Always be (machine) learning,

Logan

I expected a bit more from a refutation. If I understood the article, there are a few reasons why Citrini is off:

1. Historically, predictions about AI have been off. So this is a bet based on probability?

2. Software construction is poorly understood by those who don’t practice it. What don’t they understand that refutes the argument?

3. The reporting favors success stories and doesn’t cover the presumably far more frequent failures. Isn’t that so for the reporting on anything?

4. AI is kind of average and agents are unreliable. Are they really average? In the universe of people writing code the mean is not a high bar.

Not saying I disagree with your intuition. I just don’t see the support for it.